New Paper on "Norm-Based Generalization Bounds for Compositionally Sparse Neural Networks"

September 21, 2023

We are excited to share our latest paper, accepted at NeurIPS and written in collaboration with esteemed colleagues from MIT.

Title: Norm-Based Generalization Bounds for Compositionally Sparse Neural Networks

Authors: Tomer Galanti, Mengjia Xu, Liane Galanti, Tomaso Poggio*

Links: Download paper | BibTeX

Overview

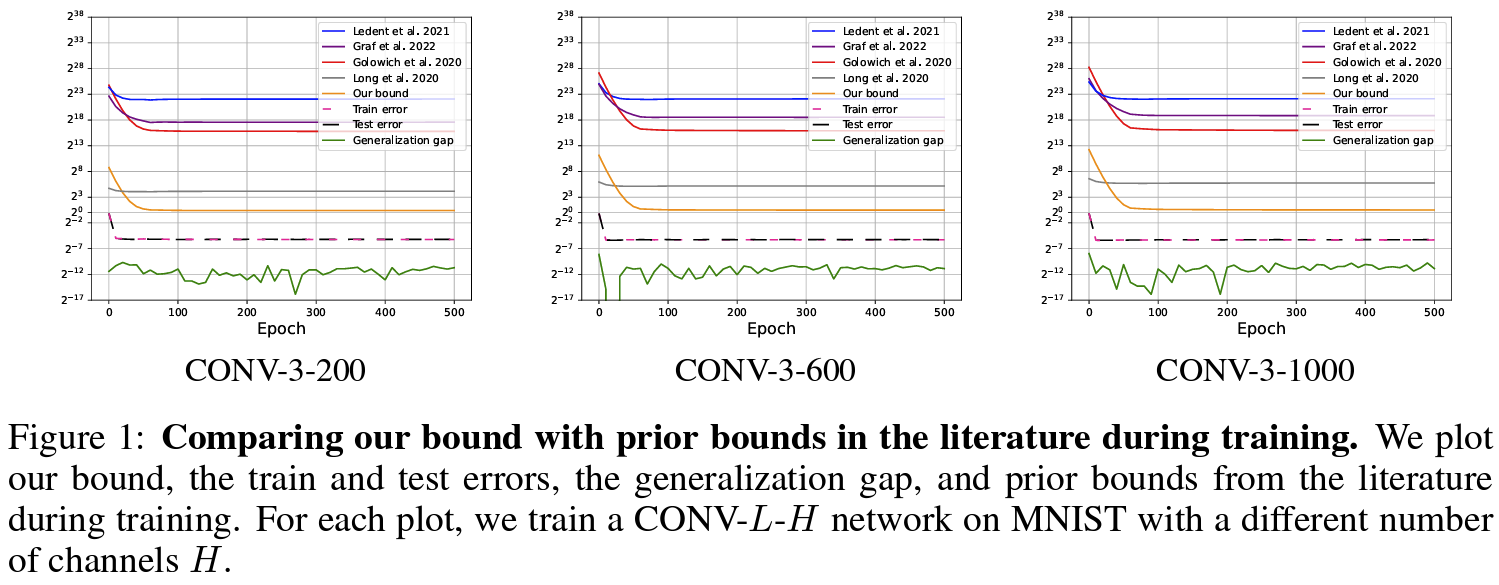

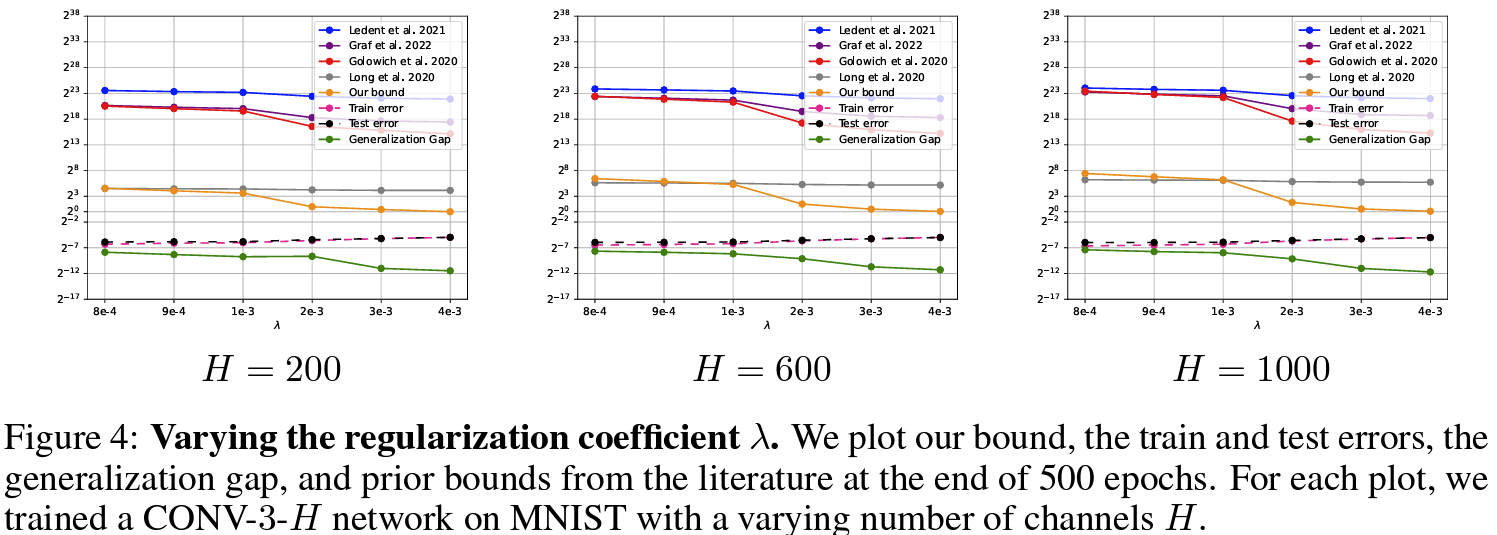

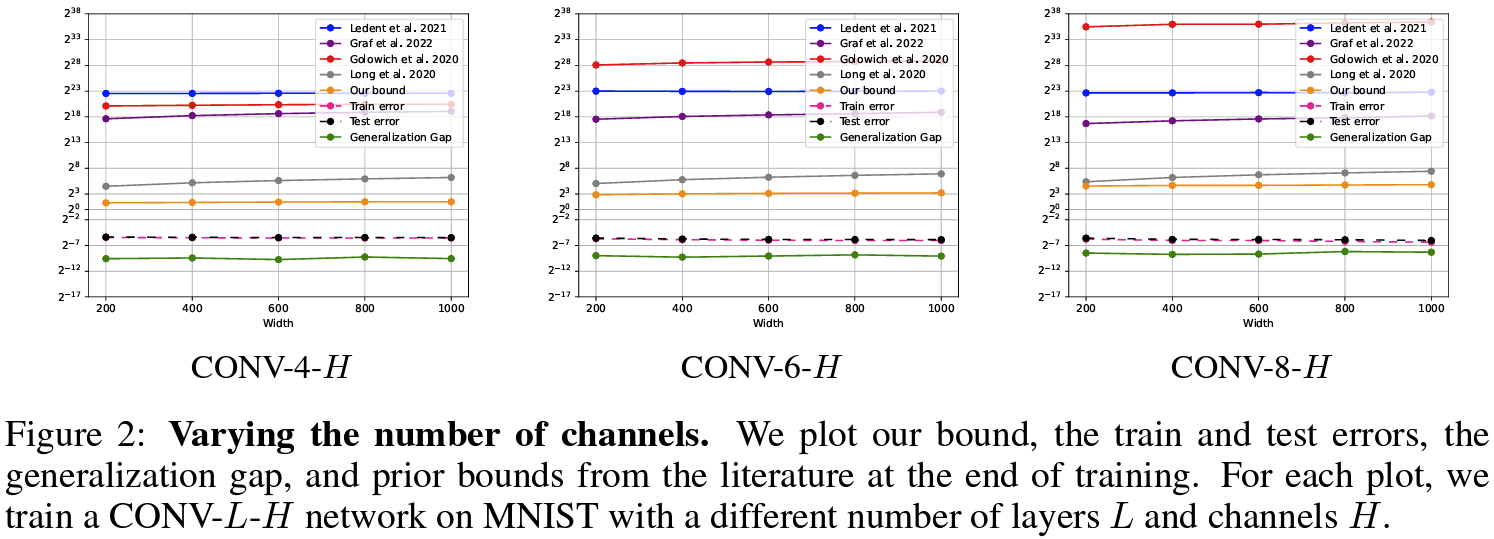

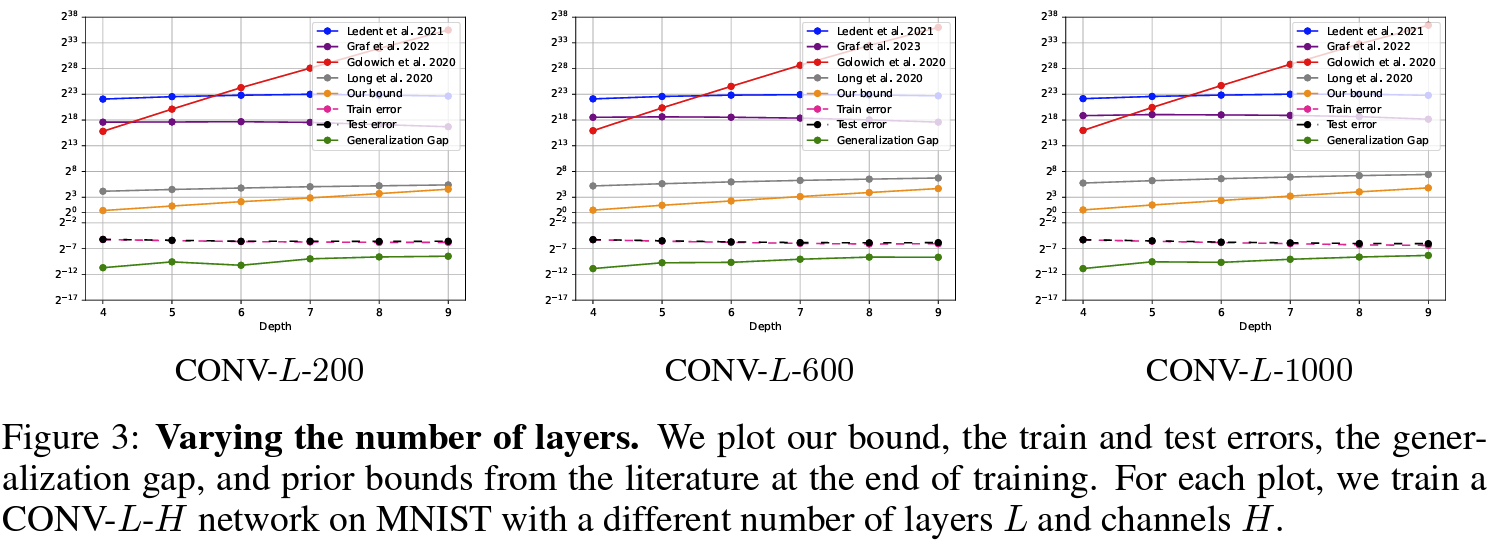

In this work, we present new norm-based generalization bounds for compositionally sparse neural networks, such as convolutional networks. Our main contributions are twofold: we derive significantly tighter bounds for sparse networks without relying on weight sharing, and we show that good generalization can be achieved with only weak dependence on network size. Our experiments confirm that these bounds are tighter than existing ones, underscoring how strongly network architecture affects test performance. The results help explain why certain architectures excel and suggest that sparsity is a key factor in their success.
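To give a feel for why sparsity matters for norm-based quantities, here is a toy NumPy sketch. It is not the bound from the paper: it assumes a hypothetical 1D locally connected layer (stride 1, no padding, no weight sharing) and simply compares the Frobenius norm of the full layer matrix with the largest per-neuron weight norm. The helper names `locally_connected_matrix` and `layer_norms` are made up for this illustration.

```python
import numpy as np


def locally_connected_matrix(kernels: np.ndarray, n_in: int) -> np.ndarray:
    """Dense matrix of a toy 1D locally connected layer (stride 1, no padding).

    kernels has shape (n_out, k): row i holds the k weights of output neuron i,
    which reads inputs i, ..., i + k - 1. No weights are shared across rows.
    """
    n_out, k = kernels.shape
    assert n_out == n_in - k + 1, "expects stride 1 and no padding"
    W = np.zeros((n_out, n_in))
    for i in range(n_out):
        W[i, i:i + k] = kernels[i]
    return W


def layer_norms(kernels: np.ndarray, n_in: int) -> tuple[float, float]:
    """Return (Frobenius norm of the full matrix, largest per-neuron norm)."""
    W = locally_connected_matrix(kernels, n_in)
    frobenius = float(np.linalg.norm(W))  # Frobenius norm is the 2D default
    max_neuron = float(np.linalg.norm(kernels, axis=1).max())
    return frobenius, max_neuron


# Illustrative setup (an assumption, not an experiment from the paper):
# every neuron has k = 3 weights, normalized to unit Euclidean norm.
rng = np.random.default_rng(0)
k = 3
for n_in in (16, 64, 256):
    n_out = n_in - k + 1
    kernels = rng.normal(size=(n_out, k))
    kernels /= np.linalg.norm(kernels, axis=1, keepdims=True)
    fro, per_neuron = layer_norms(kernels, n_in)
    print(f"n_in={n_in:4d}  frobenius={fro:.3f}  max per-neuron norm={per_neuron:.3f}")
```

With unit-norm neurons, the Frobenius norm of the full matrix equals the square root of the number of output neurons and so grows with width, while the per-neuron norm stays at 1. This is only meant to convey the intuition behind "weak dependence on network size"; the precise quantities and statements are in the paper.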